How Serverless Computing (Function-as-a-Service) works and where it is used

Serverless computing and the Function-as-a-Service (FaaS) solutions built on it help developers build products with a focus on business features. We experimented with these technologies and concluded that the existing solutions are still too raw for production use. Let's take it step by step.

The term "serverless computing" is somewhat misleading: servers of course remain at the heart of the product, but the developers don't have to worry about them. At its core, Serverless continues the same virtualization ideas as earlier as-a-Service (aaS) technologies, letting the team focus on code and feature development. If IaaS abstracts the hardware and containers abstract the application, then FaaS abstracts the business logic of a service.

The idea is that you no longer pack the application server, database, and load balancers into a container. Instead, developers isolate a function in code, upload it to the cloud platform, and run it when needed. Provisioning instances, deploying code, allocating resources, launching web interfaces, monitoring health, and ensuring security all happen automatically.
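As a minimal sketch of what "isolating a function" can look like, the snippet below uses the `handler(event, context)` signature that AWS Lambda expects from Python functions. The order-total rule, the discount threshold, and the shape of the `event` payload are hypothetical and exist only for illustration.

```python
import json


def handler(event, context):
    """Entry point the platform calls once per invocation.

    `event` carries the request payload; `context` carries runtime
    metadata and is not used in this sketch.
    """
    # Hypothetical business rule: order total with a flat 10% discount
    # for orders of 100 or more.
    items = event.get("items", [])
    subtotal = sum(item["price"] * item["qty"] for item in items)
    discount = 0.1 if subtotal >= 100 else 0.0
    total = round(subtotal * (1 - discount), 2)
    return {"statusCode": 200, "body": json.dumps({"total": total})}


if __name__ == "__main__":
    # Local smoke test; on a real platform the runtime calls handler().
    print(handler({"items": [{"price": 60, "qty": 2}]}, None))
```

Everything outside this function (instances, routing, scaling) is what the platform is expected to take over.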

FaaS provides maximum flexibility in performance management: while idle, the function consumes no resources, and when needed, the platform quickly allocates capacity that is sufficient for almost any workload. Whether the application serves one user or one hundred thousand at once, the performance of a FaaS-based system barely suffers, whereas a product with a traditional architecture would most likely run into problems.
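The scale-to-zero behaviour described here can be illustrated with a toy calculation. This is a simplified sketch, not the autoscaling algorithm of any particular platform: the function name and the per-instance concurrency of 10 are assumptions, and real autoscalers add smoothing windows and limits.

```python
import math


def desired_instances(in_flight_requests: int, per_instance_concurrency: int = 10) -> int:
    """Return how many function instances the current load would need.

    Both parameters are illustrative values, not platform defaults.
    """
    if in_flight_requests <= 0:
        return 0  # idle: scale to zero, nothing consumes resources
    return math.ceil(in_flight_requests / per_instance_concurrency)


for load in (0, 1, 100_000):
    print(load, "->", desired_instances(load))
```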

The team does not have to worry about the backend and deployment processes. Under ideal conditions, implementing a new feature comes down to uploading one function to the server. As a result, development moves faster and time to market shrinks. Across the company as a whole, adopting FaaS also encourages a platform approach, because Serverless computing requires either a pool of cloud resources from a provider or a Kubernetes cluster.

How it works in practice

There is already a whole range of Serverless platforms on the market. We took a close look at two solutions: AWS Lambda from Amazon and Knative. The first is a proprietary service for working with the Amazon cloud; the second runs on top of Kubernetes.

AWS Lambda is a fully working option with all the capabilities described above. The platform handles all routine operations: it deploys applications, monitors the health and performance of instance groups, and provides fault tolerance and scaling.

The main caveat is that it is a proprietary product, which means you are tied to the Amazon cloud and have to use other products from its ecosystem. If you want to change platforms, you will most likely have to rework the product substantially, since the rules in the new infrastructure can be very different.

Knative is a more interesting solution for us, since it runs on top of Kubernetes.

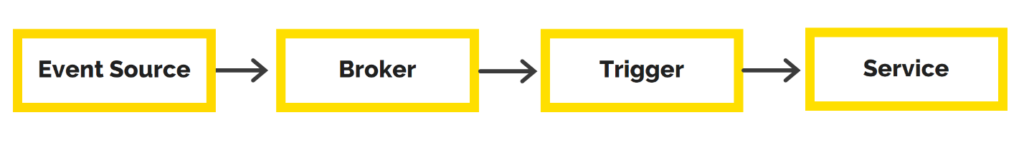

Unlike Lambda, Knative runs on your own platform, so you have to dive deeper into the architecture of the process. It looks like this:

- Event source is the entity of the FaaS platform that interacts with external event sources. The event can be an HTTP request, a message from a message broker, or an event from the platform itself.

- Broker is a "basket" that receives and stores information about events from the Event source. A broker can be backed by Kafka, kept in memory, and so on.

- Trigger is a component subscribed to the Broker that retrieves messages from the "basket" and passes them on to the Service for execution.

- Service is the working function: the isolated business logic.
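To make the flow between these four components concrete, here is a toy in-memory model of it. This is not the Knative API: the `Broker` class, its methods, the event types, and the `send_welcome_email` service are all hypothetical names chosen for the sketch.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Tuple

Event = Dict[str, str]


class Broker:
    """The "basket": stores events until they are handed to services."""

    def __init__(self) -> None:
        self._queue: Deque[Event] = deque()
        self._triggers: List[Tuple[str, Callable[[Event], str]]] = []

    def publish(self, event: Event) -> None:
        # Called by an event source (HTTP request, queue message, ...).
        self._queue.append(event)

    def add_trigger(self, event_type: str, service: Callable[[Event], str]) -> None:
        # A trigger subscribes one service to one type of event.
        self._triggers.append((event_type, service))

    def dispatch(self) -> List[str]:
        # Drain the queue and pass each event to every matching service.
        results = []
        while self._queue:
            event = self._queue.popleft()
            for event_type, service in self._triggers:
                if event["type"] == event_type:
                    results.append(service(event))
        return results


def send_welcome_email(event: Event) -> str:
    # The "service": isolated business logic (hypothetical example).
    return f"welcome email queued for {event['user']}"


broker = Broker()
broker.add_trigger("user.created", send_welcome_email)
broker.publish({"type": "user.created", "user": "alice"})
broker.publish({"type": "user.deleted", "user": "bob"})
results = broker.dispatch()
print(results)
```

Only the `user.created` event reaches the service; the other event is dropped because no trigger is subscribed to its type.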

From the developer's point of view, the process looks almost the same as with familiar containerized applications; only the unit of deployment changes: (1) write a function, (2) pack it into a Docker image, (3) upload it to the platform.
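Step (1) might look like the sketch below: a function wrapped in a small HTTP server built from the Python standard library. It assumes the platform passes the port to listen on in the `PORT` environment variable, which is how Knative Serving is understood to work; the `greet` logic and the `serve` command-line argument are hypothetical.

```python
import json
import os
import sys
from http.server import BaseHTTPRequestHandler, HTTPServer


def greet(payload: dict) -> dict:
    # Step 1: the function itself (hypothetical business logic).
    name = payload.get("name", "world")
    return {"message": f"Hello, {name}!"}


class FunctionHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        # Read the JSON request body, call the function, return JSON.
        length = int(self.headers.get("Content-Length", 0))
        raw = self.rfile.read(length) if length else b"{}"
        body = json.dumps(greet(json.loads(raw))).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve() -> None:
    # Assumption: the platform provides the listening port via PORT.
    port = int(os.environ.get("PORT", "8080"))
    HTTPServer(("", port), FunctionHandler).serve_forever()


if __name__ == "__main__":
    if sys.argv[1:] == ["serve"]:
        serve()  # blocks; this is what the container image would run
    else:
        print(greet({"name": "Ada"}))  # quick local check without a server
```

For step (2), the Docker image would copy this file in and start it with the `serve` argument; step (3) is then a matter of pointing the platform at that image.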

The main drawback of Knative is that it comes without logging and monitoring tools, which are critically important for FaaS solutions. If your product is split into functions and you lack effective monitoring and logging, you cannot quickly find the source of a failure, because you have to inspect each function separately.

FaaS benefits

This approach works best when an instant response to the user is not required and when the load can fluctuate from 0 to 100%:

- Tasks that run on a schedule: export/import operations in financial reporting and accounting systems, or backup solutions.

- Asynchronous sending of notifications to the user (push, email, SMS).

- Machine learning, the Internet of Things, and AI systems can all benefit. Serverless lets you run computations closer to the endpoint, i.e. to the user, which means lower latency and less data transfer.

What are the disadvantages of Serverless:

- This architecture is not well suited for long-running processes. If the function is used almost constantly in the application, resource consumption will be the same as for traditional products.

- The best platforms at the moment tie a company to a particular cloud provider, be it AWS, Microsoft Azure, or Google Cloud. Kubernetes-based solutions have yet to reach this level.

- FaaS is not a "magic pill" that lets developers forget about the infrastructure and just ship features to production. You still need to think through the architecture and design the functions and their interactions using domain-driven design (DDD). Otherwise, the product turns into a tangle of tightly coupled functions that is difficult to understand. Developers will not be able to deploy and change such functions individually. In the worst case, every function will have to be spun up to process a single user request.

Our conclusion: the Serverless era is still a few years away

… provided that developers keep advancing this direction, in particular by bringing open-source platforms up to the level of AWS Lambda.

The motivation for such projects can be lower resource costs and better management of large, resource-intensive products. But for now, it may be easier for developers to work the old-fashioned way. Knowing Serverless and being able to use these tools is a valuable skill, but companies should wait a couple of years before using them in production.
