How to separate frontend and backend

How do you change the architecture of a monolithic product to speed up its development, and how do you split one team into several while keeping their work consistent? For us, the answer to both questions was a new API. Below is a detailed account of how we arrived at that solution and an overview of the technologies we chose. But first, a small digression.

A few years ago I read in a scientific article that a complete education takes more and more time, and that in the near future it will take eighty years to acquire the necessary knowledge. In IT, apparently, that future has already arrived.

I was fortunate enough to start programming in the years when programmers were not divided into backend and frontend, and when the words “prototype”, “product engineer”, “UX” and “QA” were not in use. The world was simpler, the trees were taller and greener, the air was cleaner, and children played in the yards where cars are parked now. Much as I would like to go back to that time, I have to admit that all of this is the natural evolution of society. Yes, society could have developed differently, but, as you know, history does not tolerate the subjunctive mood.

Background

BILLmanager appeared at just such a time, when there was no rigid separation of specializations. It had a coherent architecture, could control user behavior, and could even be extended with plugins. Time passed, the team developed the product, and everything seemed fine, but strange things began to happen. For example, a programmer focused on business logic would start building forms poorly, making them inconvenient and hard to understand. Or adding a seemingly simple feature would take several weeks: the modules were tightly coupled architecturally, so changing one meant adjusting another.

Convenience, ergonomics and the overall development of the product were easily forgotten when the application crashed with an unknown error. Earlier, one programmer could keep up with work in several areas, but as the product and the requirements for it grew, this became impossible. The developer saw the whole picture and understood that if a function does not work correctly and reliably, then forms, buttons, tests and promotion will not help. So he put everything else aside and sat down to fix the bug. He performed his small feat, which nobody appreciated (there was simply no energy left to present it properly to the customer), but the function started working. For these small feats to actually reach customers, the team needs people responsible for different areas: frontend and backend, testing, design, support, promotion.

But that was only the first step. The team changed, but the architecture of the product remained tightly coupled. Because of this, we could not develop the application at the required pace: changing the interface meant changing the backend logic, even though the structure of the data itself often stayed the same. Something had to be done about all of this.

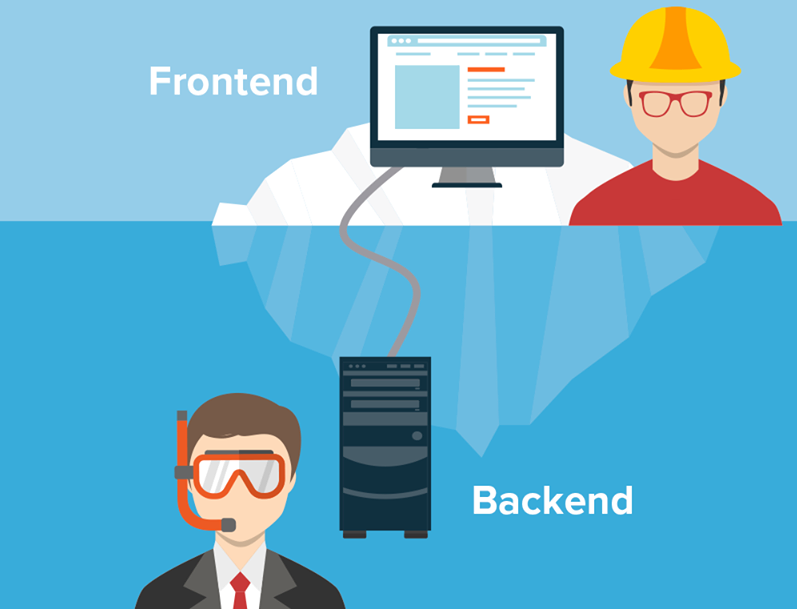

Frontend and backend

Becoming a professional in everything takes a long time and is expensive, so the modern world of application programmers is divided, for the most part, into frontend and backend.

Everything seems clear here: we hire frontend programmers, who will be responsible for the user interface, and the backend developers can finally focus on business logic, data models and everything else under the hood. At the same time, backend developers, frontend developers, testers and designers remain one team, because they build a common product and simply focus on different parts of it. Being one team means sharing one information space and, preferably, one physical space; discussing new features together and reviewing finished ones; coordinating work on large tasks.

For some abstract new project this would be enough, but our application was already written, and the amount of planned work and its deadlines clearly showed that one team could not cope. A basketball team has five players, a football team has eleven, and we had about 30 people. That did not fit the ideal Scrum team of five to nine people. We had to split up, but how could we stay connected? To move forward, we had to solve both architectural and organizational problems.

Architecture

When a product is out of date, it seems logical to abandon it and write a new one. This is a good decision if you can estimate how long it will take and that timeline suits everyone. But in our case, even under ideal conditions, developing a new product would take years. In addition, the nature of the application is such that switching from the old product to a completely different new one would be extremely difficult. Backward compatibility is very important for our customers, and without it they will refuse to upgrade to the new version. Developing from scratch is therefore hard to justify in this case. So we decided to upgrade the architecture of the existing product while keeping as much backward compatibility as possible.

Our application is a monolith whose interface was built on the server side. The frontend only carried out the instructions it received from the server. In other words, the backend, not the frontend, was responsible for the user interface. Architecturally, the frontend and backend worked as one, so changing one forced us to change the other. And that is not the worst part: far worse, it was impossible to develop the user interface without deep knowledge of what was happening on the server.

We had to separate the frontend and backend into independent applications: this was the only way to start developing them at the required pace and volume. But how do you run two projects in parallel and change their structure when they depend so heavily on each other?

The solution was an additional system: an intermediate layer. The idea of the layer is extremely simple: it coordinates the work of the backend and the frontend and absorbs all the extra costs. For example, when the payment function is decomposed on the backend, the layer combines the data, and nothing needs to change on the frontend. Or, to show all the services a user has ordered on the dashboard, we do not add another function to the backend but aggregate the data in the layer.

In addition, the layer was meant to make it clear what can be called on the server and what will be returned. I wanted it to be possible to request an operation without knowing the internal structure of the functions that perform it.
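The dashboard case can be sketched roughly as follows. This is only an illustration under stated assumptions: `Order`, `Service`, `Backend`, `DashboardItem` and `dashboardForUser` are hypothetical names invented for this example, not part of BILLmanager, and the backend is replaced by an in-memory stand-in. The calls are synchronous only to keep the sketch short; a real layer would call the backend asynchronously.

```typescript
// All names below are hypothetical; they are not BILLmanager APIs.

// Shapes returned by two independent (hypothetical) backend functions.
interface Order {
  id: number;
  userId: number;
  serviceId: number;
}

interface Service {
  id: number;
  name: string;
  monthlyPrice: number;
}

// What the layer expects from the backend. The frontend never sees this.
interface Backend {
  listOrders(userId: number): Order[];
  getService(serviceId: number): Service;
}

// The contract the frontend relies on: one dashboard row per ordered service.
interface DashboardItem {
  orderId: number;
  serviceName: string;
  monthlyPrice: number;
}

// The layer joins orders with services, so the backend needs no extra function.
function dashboardForUser(backend: Backend, userId: number): DashboardItem[] {
  return backend.listOrders(userId).map((order) => {
    const service = backend.getService(order.serviceId);
    return {
      orderId: order.id,
      serviceName: service.name,
      monthlyPrice: service.monthlyPrice,
    };
  });
}

// In-memory stand-in for the backend, used only to exercise the sketch.
const orders: Order[] = [
  { id: 1, userId: 7, serviceId: 10 },
  { id: 2, userId: 7, serviceId: 11 },
  { id: 3, userId: 8, serviceId: 10 },
];
const services: Service[] = [
  { id: 10, name: "VPS", monthlyPrice: 5 },
  { id: 11, name: "Domain", monthlyPrice: 1 },
];

const fakeBackend: Backend = {
  listOrders: (userId) => orders.filter((o) => o.userId === userId),
  getService: (serviceId) => {
    const found = services.filter((s) => s.id === serviceId)[0];
    if (!found) throw new Error(`unknown service ${serviceId}`);
    return found;
  },
};

console.log(dashboardForUser(fakeBackend, 7));
```

The frontend depends only on `DashboardItem`. If the backend later splits or merges its functions, only `dashboardForUser` has to change.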

Communications

Because the frontend and backend depended so strongly on each other, work could not be done in parallel, which slowed down both parts of the team. By splitting one large project into several at the software level, we gained freedom of action in each, but we still needed to keep the work consistent.

Some will say that consistency is achieved by improving soft skills. Yes, they need to be developed, but they are not a panacea. Look at road traffic: there, too, it matters that drivers are polite, know how to avoid unexpected obstacles and help each other in difficult situations. But! Without traffic rules, even with the best communication, we would have accidents at every intersection and a real risk of not arriving on time.

We needed rules that would be difficult to break, rules that are easier to follow than to violate. But introducing any laws brings not only benefits but also overhead, and we really did not want to slow down the main work by drawing everyone into the process. So we created a coordination group, and later a team, whose goal was to create the conditions for the different parts of the product to develop successfully. It set up the interfaces that allow the different projects to work as a whole: the very rules that are easier to follow than to break.

We call this team “API”, although the technical implementation of the new API is only a small part of its work. Just as common sections of code are moved into a separate function, the API team takes on the issues that are common to the product teams. This is where our frontend and backend connect, so the members of this team must understand the specifics of each area.

Perhaps “API” is not the best name for the team, and something about architecture or large-scale vision would suit it better, but I think this detail does not change the essence.

API

An interface for accessing server functions existed in our original application, but to its consumers it looked chaotic. Separating the frontend and backend required more certainty.

The goals for the new API emerged from the daily difficulties of implementing new product and design ideas. We needed:

- Loose coupling of system components, so that the backend and frontend can be developed in parallel.

- High scalability: the new API must not get in the way of adding functionality.

- Stability and consistency.

We did not start the search for an API solution from the backend, as is usually done; instead, we started from what its users needed.

The most common option is some kind of REST API. In recent years, descriptive models have been added to such APIs through tools like Swagger, but it is important to understand that this is still the same REST. Its main strength, which is also its main weakness, is that its rules are purely descriptive. In other words, nothing prevents the author of such an API from deviating from REST principles when implementing individual parts.

Another common solution is GraphQL. It is not perfect either, but unlike REST, a GraphQL API is not just a descriptive model but a set of real rules: every query is validated against the schema.

Earlier, I described a system that was supposed to coordinate the work of the frontend and backend. The layer is exactly that intermediate level. After considering the possible ways of working with the server, we settled on GraphQL as the API for the frontend. But since the backend is written in C++, implementing a GraphQL server turned out to be a non-trivial task. I will not describe all the difficulties and the tricks we tried in order to overcome them; none of it produced a real result. We looked at the problem from the other side and decided that simplicity is the key to success. So we settled on proven solutions: a separate Node.js server with Express.js and Apollo Server.

Next, we had to decide how to access the backend API. At first we considered exposing a REST API, then we tried using C++ add-ons for Node.js. In the end we realized that neither suited us, and after a detailed analysis we chose an API based on gRPC services for the backend.

Bringing together our experience with C++, TypeScript, GraphQL and gRPC, we arrived at an application architecture that lets us develop the backend and frontend flexibly while still building a single software product.

The result is a scheme in which the frontend communicates with an intermediate server through GraphQL queries (it knows what to ask for and what it will get in return). In its resolvers, the GraphQL server calls the API functions of the gRPC server; the two communicate using Protobuf schemas. The gRPC-based API server knows which microservice to take data from, or which one to forward a request to. The microservices themselves are also built on gRPC, which gives fast request processing, typed data and the freedom to develop them in different programming languages.
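To make the chain more concrete, here is a minimal sketch of a single resolver that forwards a GraphQL query to the API server and maps the reply back. Everything in it is assumed for illustration: the `Invoice` type, the `BillingClient` interface, the `InvoiceReply` message and the stub client are hypothetical and do not describe the real BILLmanager schema. The client is a synchronous stub so that the example runs without a gRPC dependency; real gRPC calls are asynchronous, and a real resolver would return a promise.

```typescript
// Hypothetical names throughout; this is not the actual BILLmanager schema.

// GraphQL contract seen by the frontend (SDL, shown here for reference only):
//
//   type Invoice { id: ID!  amount: Float!  paid: Boolean! }
//   type Query   { invoice(id: ID!): Invoice }

// Message shape as it might be generated from a Protobuf definition.
interface InvoiceReply {
  invoiceId: string;
  amountCents: number;
  status: "PAID" | "UNPAID";
}

// Minimal view of a client for the gRPC API server (assumed interface).
interface BillingClient {
  getInvoice(request: { invoiceId: string }): InvoiceReply | undefined;
}

// Per-request context handed to every resolver.
interface Context {
  billing: BillingClient;
}

// Resolver map using the (parent, args, context) argument order.
const resolvers = {
  Query: {
    invoice: (_parent: unknown, args: { id: string }, ctx: Context) => {
      const reply = ctx.billing.getInvoice({ invoiceId: args.id });
      if (!reply) return null;
      // Translate the Protobuf-style message into the GraphQL type.
      return {
        id: reply.invoiceId,
        amount: reply.amountCents / 100,
        paid: reply.status === "PAID",
      };
    },
  },
};

// Stub standing in for the gRPC API server.
const stubBilling: BillingClient = {
  getInvoice: ({ invoiceId }) =>
    invoiceId === "42"
      ? { invoiceId: "42", amountCents: 1250, status: "PAID" }
      : undefined,
};

console.log(resolvers.Query.invoice(undefined, { id: "42" }, { billing: stubBilling }));
```

The resolver is the only place that knows both vocabularies, so field names and units can differ between the GraphQL schema and the Protobuf messages without the frontend noticing.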

This approach has a number of drawbacks, the main one being the additional work of setting up and agreeing on schemas, as well as writing helper functions. But these costs will pay off as the number of API users grows.

Result

We have taken the evolutionary path in developing both the product and the team. It is probably too early to judge whether we have succeeded or the venture has failed, but we can sum up the intermediate results. What we have now:

- The frontend is responsible for the display, and the backend is responsible for the data.

- The frontend has kept its flexibility in querying and receiving data. The interface knows what it can ask the server and what the answers should look like.

- The backend can change its code with confidence that the user interface will keep working. It became possible to move to a microservice architecture without redoing the entire frontend.

- Now you can use mock data for the frontend when the backend is not ready yet.

- Agreeing on shared schemas eliminated the interaction problems that arose when teams understood the same task differently. The number of iterations spent reworking data formats has gone down: we follow the principle of “measure seven times, cut once”.

- Now you can plan sprint work in parallel.

- To implement individual microservices, you can now recruit developers who are not familiar with C++.
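The point about mock data can be illustrated with a small sketch. It assumes a hypothetical contract, `ServicesApi`, agreed between the teams; the names `ServiceSummary`, `mockServicesApi` and `renderServiceList` are invented for this example. The frontend code is written against the contract, so a mock can stand in until the real implementation is ready.

```typescript
// Hypothetical names; the point is that both implementations share one contract.

interface ServiceSummary {
  id: string;
  name: string;
  active: boolean;
}

// The contract agreed between the frontend and the API.
interface ServicesApi {
  listServices(userId: string): ServiceSummary[];
}

// Mock implementation the frontend can use before the backend exists.
const mockServicesApi: ServicesApi = {
  listServices: (userId) => [
    { id: `${userId}-1`, name: "Mock VPS", active: true },
    { id: `${userId}-2`, name: "Mock domain", active: false },
  ],
};

// Frontend code depends only on the contract, not on who implements it.
function renderServiceList(api: ServicesApi, userId: string): string[] {
  return api
    .listServices(userId)
    .map((s) => `${s.name} (${s.active ? "active" : "inactive"})`);
}

console.log(renderServiceList(mockServicesApi, "u1"));
```

When the backend is ready, an object with the same `ServicesApi` shape that talks to the real server replaces `mockServicesApi`, and `renderServiceList` stays unchanged.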

Of all this, I would call the ability to develop the team and the project deliberately the main achievement. I think we were able to create conditions in which each participant can improve their skills more purposefully, focus on their tasks and not scatter their attention. Each person needs to work only on their own area, and now they can do so with full engagement and without constant context switching. It is impossible to become a professional in everything, but now we do not need to.

This article turned out to be a very general overview. Its goal was to show the path and the results of a complex investigation into how to change an architecture, from a technical point of view, so that product development can continue, and to show the organizational difficulties of dividing a team into coordinated parts.
