What or who is DevOps?

In the life of every successful project, there comes a moment when the number of servers begins to grow rapidly. The application server can no longer cope with the load, so you have to bring up several more servers and put a load balancer in front of them. The database, which used to live quietly on the same server as the application, has grown and now needs not just a separate machine but a second one as well, for reliability and speed. The in-house team of theorists has suddenly heard about microservices, and now, instead of the problems of a single monolith, you have many micro-problems.

In a very short time, the number of servers has grown well past several dozen. Each one must be monitored, logs must be collected from each, and each must be protected from internal threats (“oops, I accidentally dropped the database”) and external ones.

The zoo of technologies in use grows after every meeting of programmers who want to play with ElasticSearch, Elixir, Go, Lotus and God knows what else.

Progress does not stand still either: a month rarely passes without an important update to the software or operating system you use. Yesterday you lived in peace with SysVinit, but now you are told that you need to use systemd.

A couple of years ago, a few system administrators who were skilled with bash scripts and configured hardware by hand could handle all this infrastructure growth. Now, to manage hundreds of machines, you would need to hire a couple of such people every week. Or look for alternative solutions.

A system administrator, or sysadmin, is a person who is responsible for the upkeep, configuration, and reliable operation of computer systems, especially multi-user computers such as servers.

None of these problems is new. Skillful system administrators applied their programming knowledge early and automated everything they could, and we have them to thank for tools such as Chef and Puppet. But a problem arose: not all sysadmins were skillful enough to retrain as real engineers of complex infrastructure.
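To make "automated everything" a little more concrete, here is a minimal Python sketch of the idea behind tools like Chef and Puppet: you describe the state you want, and the tool changes the machine only if it does not already match. This is not how either tool is implemented; the function name `ensure_line` and the settings file it would be used on are hypothetical, chosen purely for illustration.

```python
import os


def ensure_line(path: str, line: str) -> bool:
    """Make sure `line` is present in the file at `path`.

    Returns True if the file had to be changed, False if it already
    contained the line. (Hypothetical helper, for illustration only.)
    """
    content = ""
    if os.path.exists(path):
        with open(path, encoding="utf-8") as f:
            content = f.read()

    # Desired state already reached: do nothing.
    if line in content.splitlines():
        return False

    # Avoid gluing the new line onto an unterminated last line.
    separator = "" if content == "" or content.endswith("\n") else "\n"
    with open(path, "a", encoding="utf-8") as f:
        f.write(separator + line + "\n")
    return True
```

Calling it twice with the same arguments changes the file only the first time. That property, idempotence, is what makes it safe to apply the same description to hundreds of machines again and again instead of logging in to each one by hand.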

Moreover, programmers, who still have little idea what happens to their applications after deployment, persist in blaming system administrators when the new version of the software eats the entire CPU and throws the doors wide open to every hacker in the world. “My code is perfect; it’s your server that’s crap,” they say.

In such a difficult situation, professionals had to take up educational work. And how can there be educational work without a buzzword? So DevOps appeared: a marketing term about “culture within the organization”.

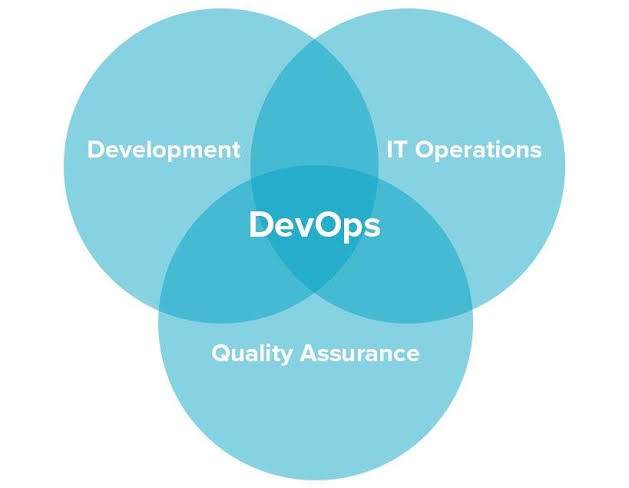

Originally, DevOps had nothing to do with a specific position in the organization. Many still maintain that DevOps is a culture, not a profession, in which communication between developers and system administrators should be as close as possible.

The developer should understand the infrastructure and be able to work out why a new feature that worked on a laptop suddenly brought down half the data center. Such knowledge helps to avoid conflicts: a programmer who knows how the server works will never put the blame on the system administrator.

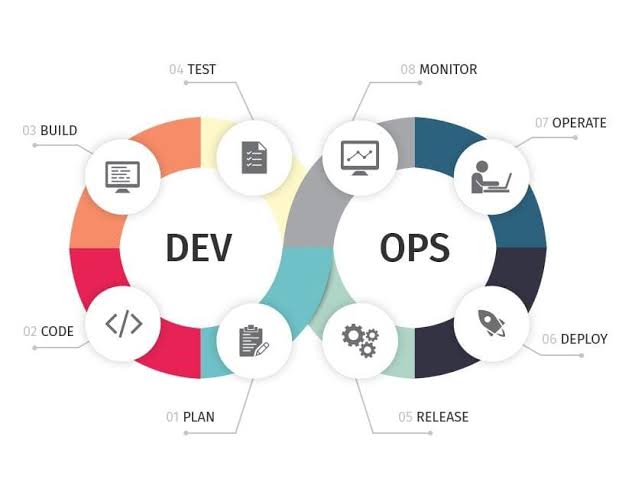

DevOps also includes topics such as Continuous Integration, Continuous Delivery and so on.

Naturally, DevOps moved from the category of “culture” and “ideology” to the category of “profession”. The number of vacancies containing this word is growing rapidly. But what do recruiters and companies expect from a DevOps engineer? Often, a mixture of skills: system administration, programming, cloud technologies and the automation of large infrastructure.

This means that you need not only to be a good programmer, but also to understand thoroughly how networks, operating systems and virtualization work and how to ensure security and fault tolerance. On top of that, you need to know several dozen different technologies, from basic, time-tested things like iptables and SELinux to more recent and fashionable tools such as the previously mentioned Chef and Puppet, or even Ansible.

Here an attentive programmer reader will say:

“It is foolish to expect that a programmer who already has so many tasks on the project will also study tons of new things related to the infrastructure and architecture of the system as a whole.”

An attentive sysadmin reader, in turn, will say:

“I am very good at rebuilding the Linux kernel and setting up the network. Why do I need to learn to program? Why do I need your Chef, git and other strange things?”

We will say this: a true engineer is defined not by knowing Ruby, Go or Bash, or by being able to “configure the network”, but by the ability to build complex, beautiful, automated and secure systems and to understand how they work, from the lowest level up to the generation and delivery of HTML pages to the browser.

Of course, we partly agree that you cannot be an absolute professional in every area of IT. But DevOps is not only about people who can do everything well. It is also about eliminating as much illiteracy as possible on both sides of the barricades (which are really one team), whether you are a system administrator tired of manual work or an AWS developer.
