How I moved my hobby project to k8s

In this article, I would like to talk about my hobby project, a service that searches for and classifies apartment rental ads, and about my experience moving it to k8s.

Table of contents

- A little bit about the project

- Introducing k8s

- Preparing for the move

- K8s configuration development

- K8s cluster deployment

A little bit about the project

In March 2017, I launched a service for parsing and classifying apartment rental ads from the social network VKontakte.

The development of the first version of the service took about a year. To deploy each component, I wrote Ansible scripts. From time to time, the service went down because of bugs in reworked code or misconfigured components.

Around June 2019, I found a bug in the parser code that prevented new ads from being collected. Instead of fixing it yet again, I decided to temporarily disable the service.

What prompted me to restore the service was learning k8s.

Introducing k8s

k8s (Kubernetes) is open-source software for automating the deployment, scaling and management of containerized applications.

The entire service infrastructure is described in configuration files, most often in YAML format.

I will not cover the internals of k8s; I will only briefly describe some of its components.

K8s components

Pod is the smallest deployable unit. It can contain several containers, which always run on the same node.

Containers inside a Pod:

- share a network and can reach each other at 127.0.0.1:$containerPort;

- do not share a file system by default, so one container cannot directly write files into another.

Deployment – manages Pods: it keeps the required number of Pod replicas running, replaces them if they crash, and rolls out new versions of Pods.
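A Deployment is declarative: you state the desired number of replicas, and a controller keeps comparing it with what is actually running. The toy Go model below sketches one pass of that comparison. The `Pod` type and `reconcile` function are hypothetical simplifications, not Kubernetes code (the real Deployment controller works through ReplicaSets and handles far more cases):

```go
package main

import "fmt"

// PodPhase is a simplified Pod state for this toy model.
type PodPhase string

const (
	Running PodPhase = "Running"
	Failed  PodPhase = "Failed"
)

// Pod is a hypothetical, minimal stand-in for a real Pod object.
type Pod struct {
	Name  string
	Phase PodPhase
}

// reconcile compares the desired replica count with the observed Pods and
// returns what a controller would do: delete failed Pods, then create or
// delete Pods until exactly `desired` of them are running.
func reconcile(desired int, pods []Pod) (toCreate int, toDelete []string) {
	running := []Pod{}
	for _, p := range pods {
		if p.Phase == Running {
			running = append(running, p)
		} else {
			// A failed Pod is removed; a new one is created in its place below.
			toDelete = append(toDelete, p.Name)
		}
	}
	switch {
	case len(running) < desired:
		toCreate = desired - len(running)
	case len(running) > desired:
		// Scale down: remove the surplus running Pods.
		for _, p := range running[desired:] {
			toDelete = append(toDelete, p.Name)
		}
	}
	return toCreate, toDelete
}

func main() {
	pods := []Pod{{"collector-a", Running}, {"collector-b", Failed}}
	create, del := reconcile(3, pods)
	fmt.Println(create, del)
}
```

Running it prints `2 [collector-b]`: the failed Pod is removed and two new Pods are requested to reach three replicas.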

PersistentVolumeClaim – a request for persistent storage. By default, such a volume is usually tied to a single node (in Minikube, for example, it is a directory on the node itself), so if two Pods on different nodes need a shared file system, you have to use a network file system such as Ceph.

Service – gives a set of Pods a stable address and proxies requests to them.

Service types:

- LoadBalancer – exposes the Service to the external network through a cloud provider's load balancer;

- NodePort (only ports 30000-32767 by default) – exposes the Service to the external network on a port of every node, without an external load balancer;

- ClusterIP – makes the Service reachable only within the cluster network;

- ExternalName – maps the Service to an external DNS name, so that Pods can reach an external service.

ConfigMap – storage for configuration.

k8s does not restart Pods when a ConfigMap they use changes. To get Pods restarted with the new config, include a version in the ConfigMap name and change it every time the ConfigMap changes: the Pod template then references a new name, and the Deployment rolls out new Pods.
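One way to automate the version in the name is to derive it from the contents, so it changes exactly when the data does. Below is a minimal Go sketch, assuming a hypothetical `versionedName` helper and example keys; tools such as kustomize's `configMapGenerator` also append a content hash to generated ConfigMap names, though not necessarily with this exact scheme:

```go
package main

import (
	"crypto/sha256"
	"encoding/hex"
	"fmt"
	"sort"
)

// versionedName returns base + "-" + a short hash of the ConfigMap data.
// Any change to a key or value yields a new name, so a Pod template that
// references it changes too and the Deployment rolls out new Pods.
func versionedName(base string, data map[string]string) string {
	keys := make([]string, 0, len(data))
	for k := range data {
		keys = append(keys, k)
	}
	sort.Strings(keys) // map iteration order is random; sort for a stable hash

	h := sha256.New()
	for _, k := range keys {
		// Length-prefix each field so that ("ab", "c") and ("a", "bc") differ.
		fmt.Fprintf(h, "%d:%s%d:%s", len(k), k, len(data[k]), data[k])
	}
	return base + "-" + hex.EncodeToString(h.Sum(nil))[:10]
}

func main() {
	// Hypothetical configuration keys for the collector component.
	cfg := map[string]string{"PARSER_HOST": "parser", "LOG_LEVEL": "info"}
	fmt.Println(versionedName("collector-config", cfg))
}
```

The Deployment's Pod template would then reference the generated name, for example through `envFrom`.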

Secret – storage for secret configs (passwords, keys, tokens).

The same versioning trick applies to Secrets.

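Label – key/value pairs attached to k8s objects, for example, Pods.

When you first get to know k8s, it may not be entirely clear how to use Labels. Their main job is selection: a Service finds the Pods it routes traffic to, and a Deployment finds the Pods it manages, by matching their labels.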
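Equality-based selection boils down to a subset check: an object matches if it carries every key/value pair in the selector. A small Go sketch with hypothetical Pod names and a hypothetical `role` label (set-based selectors such as `In` or `Exists` are not covered):

```go
package main

import "fmt"

// matches reports whether labels contain every key/value pair in selector.
// This models equality-based selectors such as a Service's spec.selector.
func matches(selector, labels map[string]string) bool {
	for k, v := range selector {
		// Check presence explicitly: label values may legitimately be empty.
		if got, ok := labels[k]; !ok || got != v {
			return false
		}
	}
	return true
}

func main() {
	pods := map[string]map[string]string{
		"view-1":      {"app": "view"},
		"collector-1": {"app": "collector", "role": "daemon"},
		"collector-2": {"app": "collector", "role": "cron"},
	}
	selector := map[string]string{"app": "collector", "role": "daemon"}
	for name, labels := range pods {
		if matches(selector, labels) {
			fmt.Println(name)
		}
	}
}
```

Only `collector-1` is printed: `collector-2` has a different `role`, and `view-1` has a different `app`.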

Preparing for the move

Functionality trimming

To make the service more stable and predictable, I had to remove all the auxiliary components that worked poorly and slightly rewrite the main ones.

So I decided to drop:

- the parsing code for sites other than VK;

- the request proxy component;

- the component that sent notifications about new ads to VKontakte and Telegram.

Service Components

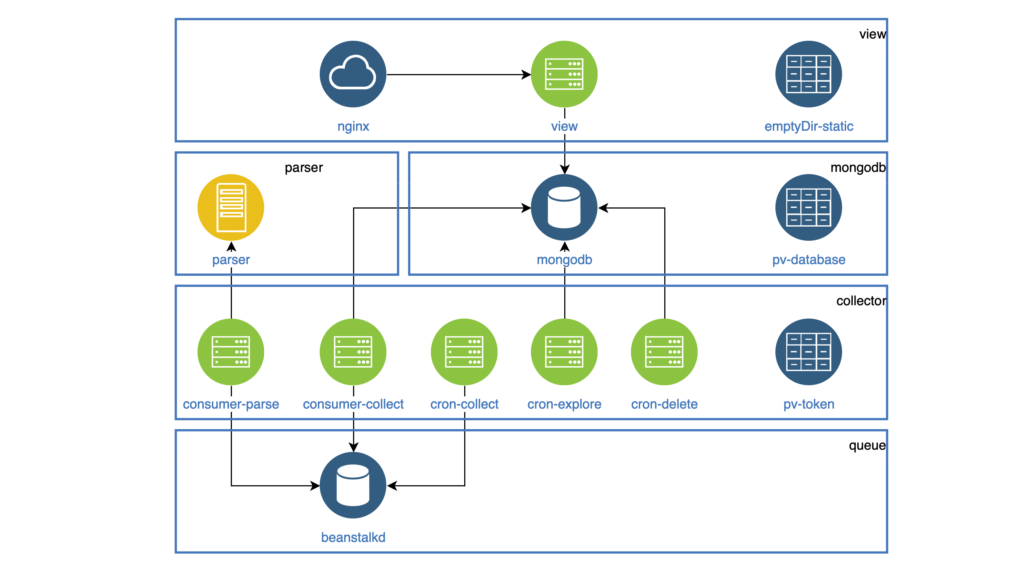

After all these changes, the internals of the service looked like this:

- view – searching and displaying ads on the website (NodeJS);

- parser – ad classifier (Go);

- collector – collecting, processing and deleting ads (PHP):

  - cron-explore – a console command that searches VKontakte for groups where housing is offered for rent;

  - cron-collect – a console command that visits the groups found by cron-explore and collects the ads from them;

  - cron-delete – a console command that deletes expired ads;

  - consumer-parse – a queue consumer that receives jobs from cron-collect and classifies ads using the parser component;

  - consumer-collect – a queue consumer that receives jobs from consumer-parse and filters out bad and duplicate ads.
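The flow between the collector's commands can be sketched as a chain of stages. The Go sketch below is only a model: buffered channels stand in for the real queue (which the article does not name), `classify` is a keyword stub in place of the parser service, and duplicates are assumed to be ads with the same normalized text:

```go
package main

import (
	"fmt"
	"strings"
)

// Ad is a hypothetical, simplified ad record.
type Ad struct {
	ID   int
	Text string
}

// Classified is an ad after the classifier has labelled it.
type Classified struct {
	Ad
	IsRental bool
}

// classify is a stub standing in for the parser component; its real logic
// is not described in the article, so a keyword check is used here.
func classify(ad Ad) Classified {
	return Classified{Ad: ad, IsRental: strings.Contains(strings.ToLower(ad.Text), "rent")}
}

// consumerParse models consumer-parse: it takes jobs from cron-collect and
// passes classified ads on to consumer-collect.
func consumerParse(in <-chan Ad, out chan<- Classified) {
	for ad := range in {
		out <- classify(ad)
	}
	close(out)
}

// consumerCollect models consumer-collect: it drops non-rental ads and
// duplicates (here: ads whose trimmed, lowercased text was already seen).
func consumerCollect(in <-chan Classified) []Ad {
	seen := map[string]bool{}
	var kept []Ad
	for c := range in {
		key := strings.ToLower(strings.TrimSpace(c.Text))
		if !c.IsRental || seen[key] {
			continue
		}
		seen[key] = true
		kept = append(kept, c.Ad)
	}
	return kept
}

// runPipeline wires the stages together; the buffered channels play the queue.
func runPipeline(ads []Ad) []Ad {
	jobs := make(chan Ad, len(ads))
	parsed := make(chan Classified, len(ads))
	go consumerParse(jobs, parsed)
	for _, ad := range ads { // cron-collect: enqueue the collected ads
		jobs <- ad
	}
	close(jobs)
	return consumerCollect(parsed)
}

func main() {
	ads := []Ad{
		{1, "Room for rent near the metro"},
		{2, "Selling a bicycle"},
		{3, "room for rent near the metro "},
	}
	for _, ad := range runPipeline(ads) {
		fmt.Println(ad.ID, ad.Text)
	}
}
```

With the sample input, only ad 1 survives: ad 2 is not a rental ad and ad 3 duplicates ad 1.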

Building Docker images

To manage and monitor all components in a uniform way, I decided to:

- pass component configuration through environment variables;

- write logs to stdout.
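A minimal Go sketch of both conventions, assuming hypothetical variable names (`PARSER_URL`, `WORKERS`) and defaults. Passing `getenv` as a function keeps the loader testable without touching the real environment:

```go
package main

import (
	"fmt"
	"log"
	"os"
	"strconv"
)

// Config holds settings passed to a component through environment variables.
// The variable names and defaults are hypothetical examples.
type Config struct {
	ParserURL string
	Workers   int
}

// loadConfig reads settings via getenv (os.Getenv in production) and falls
// back to defaults for unset variables.
func loadConfig(getenv func(string) string) (Config, error) {
	cfg := Config{ParserURL: "http://parser:8080", Workers: 4}
	if v := getenv("PARSER_URL"); v != "" {
		cfg.ParserURL = v
	}
	if v := getenv("WORKERS"); v != "" {
		n, err := strconv.Atoi(v)
		if err != nil {
			return Config{}, fmt.Errorf("WORKERS: %w", err)
		}
		cfg.Workers = n
	}
	return cfg, nil
}

func main() {
	// Log to stdout explicitly (the standard logger defaults to stderr).
	logger := log.New(os.Stdout, "collector ", log.LstdFlags)
	cfg, err := loadConfig(os.Getenv)
	if err != nil {
		logger.Fatal(err)
	}
	logger.Printf("starting with parser=%s workers=%d", cfg.ParserURL, cfg.Workers)
}
```

In k8s, these variables would come from the container's `env` or `envFrom` section (for example, from a ConfigMap), and `kubectl logs` shows whatever the container writes to stdout and stderr.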

There is nothing special about the images themselves.

K8s configuration development

With the components packaged as Docker images, I started developing the k8s configuration.

All components that run as daemons are defined as Deployments. Each daemon must be reachable within the cluster, so each one has a Service. All tasks that must run periodically are defined as CronJobs.

All static files (images, JS, CSS) are stored in the view container, while the Nginx container has to serve them. Both containers are in one Pod. Containers in a Pod do not share a file system, but when the Pod starts, you can copy all the static files into an emptyDir volume mounted in both containers. Such a volume is shared between containers, but only within one Pod.

The collector component is used in both Deployments and CronJobs.

All these components call the VKontakte API and need somewhere to store a shared access token.

For this, I used a PersistentVolumeClaim mounted into each Pod. Such a volume is shared between Pods, but only within one node.

PersistentVolumeClaim is also used to store the database data. As a result, I ended up with the following scheme (Pods of one component are grouped into blocks):

K8s cluster deployment

To get started, I deployed the cluster locally using Minikube.

Of course, there were some mistakes, and these commands helped me a lot:

```shell
kubectl logs -f pod-name
kubectl describe pod pod-name
```

Once I had learned how to deploy the cluster in Minikube, deploying it to DigitalOcean was not difficult.

In conclusion, I can say that the service has been running stably for 2 months.
