GitLab Shell Runner. Concurrent launch of test services using Docker Compose

This article will be of interest to both testers and developers, but it is aimed mainly at automation engineers who need to configure GitLab CI/CD for integration testing when infrastructure resources are scarce and/or there is no container orchestration platform. I will show how to configure the deployment of test environments using Docker Compose on a single GitLab shell runner so that, when several environments are deployed at once, the launched services do not interfere with each other.

Preconditions

In my practice, I have often had to “fix” integration testing on projects. Often the first and most significant problem is a CI pipeline in which integration testing of the developed service(s) is carried out in a shared dev/stage environment. This causes quite a few problems:

- Due to defects in a particular service, the test environment may be corrupted by broken data during integration testing. There have been cases where a request with malformed JSON crashed a service, which made the whole environment unusable.

- The test environment slows down as test data grows. I think there is no point in describing an example with cleaning or rolling back a database. In my practice, I have not seen a project where this procedure went smoothly.

- There is a risk of breaking the test environment while testing system-wide settings, for example user, group, password, or application policies.

- Test data from autotests gets in the way of manual testers.

Someone will say that good autotests should clean up their data after themselves. I have arguments against this:

- Dynamic environments are very convenient to use.

- Not every object can be deleted from the system through the API. For example, a delete call may not be implemented because it contradicts the business logic.

- Creating an object through the API can create a huge amount of metadata, which is problematic to delete.

- If the tests depend on each other, cleaning up data after the tests complete turns into a headache.

- It requires additional (and, in my opinion, not always justified) API calls.

And the main argument: when test data starts being cleaned directly in the database, it turns into a real circus! From the developers you hear: “I only added/deleted/renamed a table, why did 100500 integration tests fail?”

In my opinion, the best solution is a dynamic environment.

Many people use docker-compose to run a test environment, but few use docker-compose for integration testing in CI/CD. Here I am not considering Kubernetes, Swarm, and other container orchestration platforms, since not every company has them. It would be nice if docker-compose.yml were universal, which raises several questions:

- Even if we have our own QA runner, how can we make sure that services launched via docker-compose do not interfere with each other?

- How do we collect the logs of the services under test?

- How do we clean the runner?

I have my own GitLab runner for my projects, and I ran into these questions while developing a Java client for TestRail, or rather, while running its integration tests. So we will answer them using examples from this project.

GitLab shell runner

For the runner, I recommend a Linux virtual machine with 4 vCPU, 4 GB RAM, 50 GB HDD.

There is a lot of information on the Internet about configuring gitlab-runner, so briefly:

- Connect to the machine over SSH.

- If you have less than 8 GB of RAM, I recommend creating a 10 GB swap so that the OOM killer does not come and kill our processes for lack of RAM. This can happen when more than 5 jobs are started simultaneously. Jobs will run slower, but stably.

- Install gitlab-runner, docker, docker-compose, and make.

- Add the gitlab-runner user to the docker group:

    sudo groupadd docker
    sudo usermod -aG docker gitlab-runner

- Register gitlab-runner.

- Open /etc/gitlab-runner/config.toml for editing and add:

    concurrent=20
    [[runners]]
      request_concurrency = 10

This allows jobs to run in parallel on the same runner.

If your machine is more powerful, for example 8 vCPU and 16 GB RAM, these numbers can be at least twice as large. But it all depends on what exactly will be launched on this runner and in what quantity.

That is enough.
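The swap recommendation from the list above can be sketched roughly as follows. This is only an illustration: the 8 GB threshold and the 10 GB size come from the text, while the `/swapfile` path and the function names `needs_swap` and `print_swap_commands` are assumptions. The script only prints the commands instead of running them, and `fallocate`-based swap files may not work on every filesystem.

```shell
#!/bin/sh
# Sketch: decide whether the runner host needs the swap file suggested above.
# Threshold (8 GB) and size (10 GB) follow the article; /swapfile is an assumed path.

THRESHOLD_KB=$((8 * 1024 * 1024))   # 8 GB expressed in kB, the unit used by /proc/meminfo

# Succeeds (exit status 0) when the given MemTotal value in kB is below the threshold.
needs_swap() {
    [ "$1" -lt "$THRESHOLD_KB" ]
}

# Prints the commands that would create and enable the swap file (does not run them).
print_swap_commands() {
    cat <<'EOF'
sudo fallocate -l 10G /swapfile
sudo chmod 600 /swapfile
sudo mkswap /swapfile
sudo swapon /swapfile
EOF
}

# Usage on the runner host (assumes Linux with /proc/meminfo):
#   mem_kb=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)
#   needs_swap "$mem_kb" && print_swap_commands
```

Printing the commands rather than executing them keeps the sketch safe to try; review them before running anything as root.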

Preparing docker-compose.yml

The main task is to prepare a docker-compose.yml that will be used both locally and in the CI pipeline.

The COMPOSE_PROJECT_NAME variable will be used to start several instances of the environment.

An example of my docker-compose.yml:

    version: "3"

    volumes:
      static-content:

    services:
      db:
        image: mysql:5.7.22
        environment:
          MYSQL_HOST: db
          MYSQL_DATABASE: mydb
          MYSQL_ROOT_PASSWORD: 1234
          SKIP_GRANT_TABLES: 1
          SKIP_NETWORKING: 1
          SERVICE_TAGS: dev
          SERVICE_NAME: mysql

      migration:
        image: registry.gitlab.com/touchbit/image/testrail/migration:latest
        links:
          - db
        depends_on:
          - db

      fpm:
        image: registry.gitlab.com/touchbit/image/testrail/fpm:latest
        container_name: "testrail-fpm-${CI_JOB_ID:-local}"
        volumes:
          - static-content:/var/www/testrail
        links:
          - db

      web:
        image: registry.gitlab.com/touchbit/image/testrail/web:latest
        ports:
          - ${TR_HTTP_PORT:-80}:80
          - ${TR_HTTPS_PORT:-443}:443
        volumes:
          - static-content:/var/www/testrail
        links:
          - db
          - fpm
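To make the role of COMPOSE_PROJECT_NAME more concrete, here is a minimal sketch of how a per-job project name could be derived and what container names it leads to. The function names are hypothetical. The `<job id>-testrail` pattern is the one used in the Makefile in the next section, and the `<project>_<service>_1` form is the docker-compose v1 naming that the Makefile relies on (newer Compose releases join the parts with hyphens instead).

```shell
#!/bin/sh
# Sketch: one compose project per CI job, "local" when run outside CI.

# Prints the project name for a job ID; falls back to "local" if the ID is empty or missing.
compose_project_name() {
    echo "${1:-local}-testrail"
}

# Prints the container name docker-compose v1 would generate for a service
# that has no explicit container_name.
compose_container_name() {
    echo "${1}_${2}_1"
}

# Usage (the compose file path is the one from the article's Makefile):
#   export COMPOSE_PROJECT_NAME="$(compose_project_name "${CI_JOB_ID:-}")"
#   docker-compose -f .indirect/docker-compose.yml up -d
```

Because every job gets its own project name, its containers, default network, and named volumes are created under a separate prefix and do not clash with those of other jobs.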

Makefile Preparation

I use a Makefile because it is very convenient for managing the environment both locally and in CI.

    # Use the GitLab job ID as a unique prefix; fall back to "local" outside CI.
    ifeq ($(CI_JOB_ID),)
      CI_JOB_ID := local
    endif

    # docker-compose prefixes containers, networks and volumes with this name.
    export COMPOSE_PROJECT_NAME = $(CI_JOB_ID)-testrail

    # Recipe lines below must start with a tab character.
    docker-down:
    	docker-compose -f .indirect/docker-compose.yml down

    docker-up: docker-down
    	docker-compose -f .indirect/docker-compose.yml pull
    	docker-compose -f .indirect/docker-compose.yml up --force-recreate --renew-anon-volumes -d

    # Save stdout and stderr of each service; ignore containers that do not exist.
    docker-logs:
    	mkdir -p ./logs
    	docker logs $${COMPOSE_PROJECT_NAME}_web_1 > logs/testrail-web.log 2>&1 || true
    	docker logs $${COMPOSE_PROJECT_NAME}_fpm_1 > logs/testrail-fpm.log 2>&1 || true
    	docker logs $${COMPOSE_PROJECT_NAME}_migration_1 > logs/testrail-migration.log 2>&1 || true
    	docker logs $${COMPOSE_PROJECT_NAME}_db_1 > logs/testrail-mysql.log 2>&1 || true

    docker-clean:
    	@echo Stopping all testrail containers
    	docker kill $$(docker ps --filter=name=testrail -q) || true
    	@echo Removing exited testrail containers
    	docker rm -f $$(docker ps -a --filter=name=testrail --filter=status=exited -q) || true
    	@echo Removing dangling images
    	docker rmi -f $$(docker images -f "dangling=true" -q) || true
    	@echo Removing testrail images
    	docker rmi -f $$(docker images --filter=reference='registry.gitlab.com/touchbit/image/testrail/*' -q) || true
    	@echo Removing all unused volumes
    	docker volume rm -f $$(docker volume ls -q) || true
    	@echo Removing testrail networks
    	docker network rm $$(docker network ls --filter=name=testrail -q) || true
    	docker ps
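The docker-logs target names each container explicitly. As an alternative sketch (not the author's method), logs can be collected for every container of a project by filtering on the `com.docker.compose.project` label that Compose attaches to the containers it creates. The function names `log_path` and `collect_logs` are hypothetical, and `collect_logs` is only defined here, not called, since it needs a running Docker daemon.

```shell
#!/bin/sh
# Sketch: collect logs for all containers of one compose project.

# Prints the log file path for a container name: <dir>/<name>.log
log_path() {
    printf '%s/%s.log\n' "$1" "$2"
}

# Saves stdout and stderr of every container in the given project (requires Docker).
collect_logs() {
    project=$1
    dir=${2:-logs}
    mkdir -p "$dir"
    docker ps -a --filter "label=com.docker.compose.project=$project" --format '{{.Names}}' |
    while read -r name; do
        # Keep going even if one container has already been removed.
        docker logs "$name" > "$(log_path "$dir" "$name")" 2>&1 || true
    done
}

# Usage inside a job:
#   collect_logs "$COMPOSE_PROJECT_NAME" logs
```

This variant also picks up containers whose names are set explicitly with container_name, at the cost of less readable log file names.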

Preparing .gitlab-ci.yml

Running integration tests

    Integration:
      stage: test
      tags:
        - my-shell-runner
      before_script:
        - docker login -u gitlab-ci-token -p ${CI_JOB_TOKEN} ${CI_REGISTRY}
        - export TR_HTTP_PORT=$(shuf -i10000-60000 -n1)
        - export TR_HTTPS_PORT=$(shuf -i10000-60000 -n1)
      script:
        - make docker-up
        - java -jar itest.jar --http-port ${TR_HTTP_PORT} --https-port ${TR_HTTPS_PORT}
        - docker run --network=testrail-network-${CI_JOB_ID:-local} --rm itest
      after_script:
        - make docker-logs
        - make docker-down
      artifacts:
        when: always
        paths:
          - logs
        expire_in: 30 days

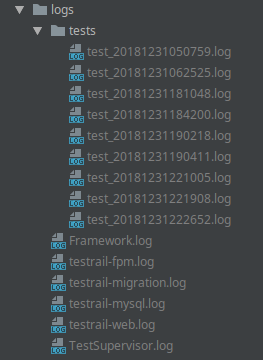

After such a job runs, the logs directory in its artifacts contains the service and test logs, which is very convenient when errors occur. Each of my tests writes its own log in parallel, but I will talk about this separately.
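The job above picks TR_HTTP_PORT and TR_HTTPS_PORT independently with `shuf`, so in rare cases both variables could receive the same number. As a possible alternative (an assumption, not what the project does), the ports can be derived from the numeric job ID so that the two values always fall into disjoint ranges. The function names are hypothetical. Neither approach checks whether a port is already taken by another process, and two jobs whose IDs differ by a multiple of 25000 would still get the same ports.

```shell
#!/bin/sh
# Sketch: derive a distinct HTTP/HTTPS port pair from a numeric job ID.
# HTTP ports land in 10000-34999, HTTPS ports in 35000-59999, so they never overlap.

http_port() {
    echo $((10000 + $1 % 25000))
}

https_port() {
    echo $((35000 + $1 % 25000))
}

# Usage in before_script (CI_JOB_ID is provided by GitLab):
#   export TR_HTTP_PORT=$(http_port "$CI_JOB_ID")
#   export TR_HTTPS_PORT=$(https_port "$CI_JOB_ID")
```

Deterministic ports also make it easy to tell from a job ID which port its environment was listening on.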

Runner Cleaning

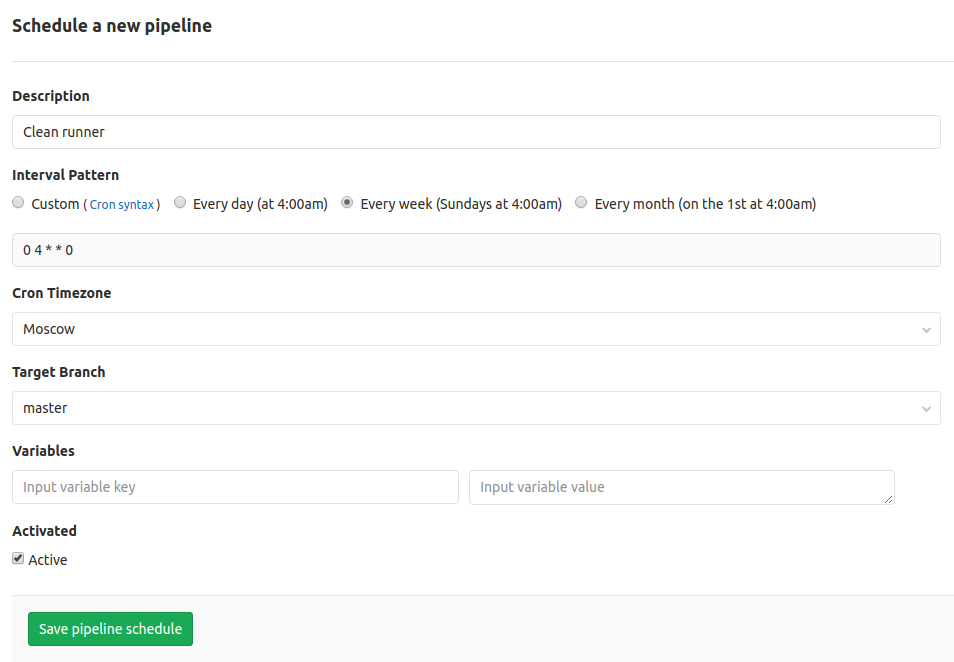

The job will run only on a schedule.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 |

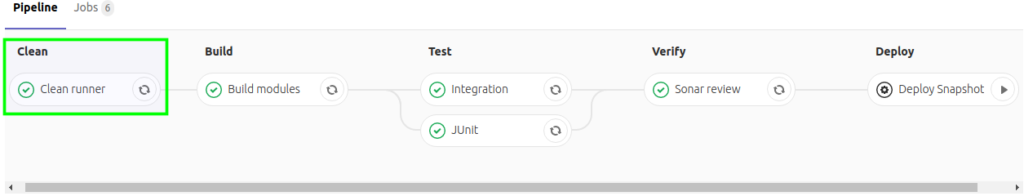

stages: - clean - build - test Clean runner: stage: clean only: - schedules tags: - my-shell-runner script: - make docker-clean |

Next, go to our GitLab project -> CI / CD -> Schedules -> New Schedule and add a new schedule.

Result

We run 4 jobs in GitLab CI.

In the log of the last job with integration tests, we see containers from different jobs.

    CONTAINER ID  NAMES
    c6b76f9135ed  204645172-testrail-web_1
    01d303262d8e  204645172-testrail-fpm_1
    2cdab1edbf6a  204645172-testrail-migration_1
    826aaf7c0a29  204645172-testrail-mysql_1
    6dbb3fae0322  204645084-testrail-web_1
    3540f8d448ce  204645084-testrail-fpm_1
    70fea72aa10d  204645084-testrail-mysql_1
    d8aa24b2892d  204644881-testrail-web_1
    6d4ccd910fad  204644881-testrail-fpm_1
    685d8023a3ec  204644881-testrail-mysql_1
    1cdfc692003a  204644793-testrail-web_1
    6f26dfb2683e  204644793-testrail-fpm_1
    029e16b26201  204644793-testrail-mysql_1
    c10443222ac6  204567103-testrail-web_1
    04339229397e  204567103-testrail-fpm_1
    6ae0accab28d  204567103-testrail-mysql_1
    b66b60d79e43  204553690-testrail-web_1
    033b1f46afa9  204553690-testrail-fpm_1
    a8879c5ef941  204553690-testrail-mysql_1
    069954ba6010  204553539-testrail-web_1
    ed6b17d911a5  204553539-testrail-fpm_1
    1a1eed057ea0  204553539-testrail-mysql_1

Everything seems fine, but there is a nuance. A pipeline can be cancelled while the integration tests are running, in which case the running containers will not be stopped. So from time to time you need to clean the runner. Unfortunately, the corresponding improvement request for GitLab CE is still in Open status.

But we have added a scheduled job, and nothing prevents us from starting it manually.

Go to the project -> CI / CD -> Schedules and run the Clean runner job.
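For leftovers from cancelled pipelines, a more targeted cleanup than removing everything is also conceivable. The sketch below is an assumption, not part of the project: it extracts the `<job id>-testrail` project names from container names like those in the listing above and runs `docker-compose down` for each of them except the current one. The function names are hypothetical, and `down_stale_projects` is only defined, not called, because it would also stop environments of jobs that are still running.

```shell
#!/bin/sh
# Sketch: find compose projects left behind by earlier CI jobs.

# Reads container names on stdin and prints the unique project names of the
# form <job id>-testrail. Names without a numeric job ID prefix are ignored.
projects_from_names() {
    sed -n 's/^\([0-9][0-9]*-testrail\).*/\1/p' | sort -u
}

# Tears down every such project except the one passed as the first argument.
# Requires Docker; the compose file path is the one from the article's Makefile.
down_stale_projects() {
    current=${1:-}
    docker ps -a --filter name=testrail --format '{{.Names}}' |
    projects_from_names |
    while read -r project; do
        [ "$project" = "$current" ] && continue
        docker-compose -p "$project" -f .indirect/docker-compose.yml down
    done
}

# Usage, only when no other integration jobs are active:
#   down_stale_projects "$COMPOSE_PROJECT_NAME"
```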

To sum up:

- We have one shell runner.

- There are no conflicts between jobs and environments.

- Integration test jobs run in parallel.

- You can run integration tests both locally and in a container.

- Service and test logs are collected and attached to the pipeline job.

- Old Docker images can be cleaned off the runner.

Setup takes about 2 hours.

