Docker: NOT useless advice

In the comments on our article “Docker: bad advices”, many readers asked us to explain why the Dockerfile described in it is so terrible.

Summary of the previous story: two developers on a tight deadline wrote a Dockerfile. While they were at it, Ops (let’s call him Martin) came by. The resulting Dockerfile is so bad that he is on the verge of a heart attack.

Now let’s figure out what’s wrong with this Dockerfile.

So, a week has passed.

Peter, the developer, runs into Ops in the dining room over a cup of coffee.

P: Mr. Martin, are you very busy? I would like to figure out what we did wrong.

M: That’s good, I don’t often meet developers who are interested in operations.

To begin, let’s agree on some things:

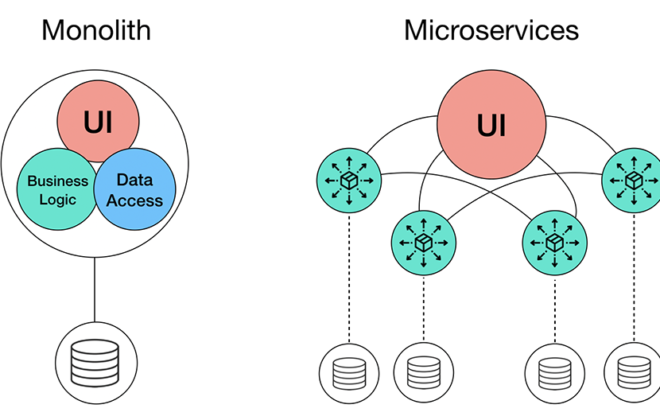

- Docker ideology: one container – one process.

- The smaller the container, the better.

- The more is taken from the cache, the better.

P: But why should there be one process in one container?

M: When a container starts, Docker monitors the process with PID 1. If that process dies, the container stops, and Docker can restart it if a restart policy is configured. Now suppose several applications run in the container, or the main application does not run as PID 1. If such a process dies, Docker will not know about it.

If there are no more questions, show your Dockerfile.
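As a small illustration of the PID 1 point, here is a sketch of the two ways a Dockerfile can start the main process. The base image and the passenger command are borrowed from later in this article; the fragment as a whole is an assumption for illustration, not the Dockerfile under review.

```dockerfile
FROM ruby:2.5.5-stretch
WORKDIR /app

# Shell form: Docker wraps the command in `/bin/sh -c`, so the shell,
# not the application, is what the container starts as PID 1.
# CMD bundle exec passenger start

# Exec form: the command is started directly, without a wrapping shell,
# so it runs as PID 1 and Docker sees its exit immediately.
CMD ["bundle", "exec", "passenger", "start"]
```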

And Peter showed:

```dockerfile
FROM ubuntu:latest

COPY ./ /app
WORKDIR /app

RUN apt-get update
RUN apt-get upgrade
RUN apt-get -y install libpq-dev imagemagick gsfonts ruby-full ssh supervisor
RUN gem install bundler
RUN curl -sL https://deb.nodesource.com/setup_9.x | sudo bash -
RUN apt-get install -y nodejs
RUN bundle install --without development test --path vendor/bundle
RUN rm -rf /usr/local/bundle/cache/*.gem
RUN apt-get clean
RUN rm -rf /var/lib/apt/lists/* /tmp/* /var/tmp/*
RUN rake assets:precompile

CMD ["/app/init.sh"]
```

M: OK, let’s go through it in order. Let’s start with the first line:

```dockerfile
FROM ubuntu:latest
```

You take the latest tag. Using the latest tag leads to unpredictable consequences. Imagine that the image maintainer builds a new version of the image with a different set of software, and that image gets the latest tag. At best, your image stops building; at worst, you catch bugs that did not exist before.

You also take an image of a full OS with a lot of unnecessary software, which bloats the container. And the more software, the more holes and vulnerabilities.

In addition, the larger the image, the more space it takes up on the host and in the registry (you do store images somewhere, right?).

P: Yes, of course, we have a registry, and you set it up.

M: So, what was I talking about? Oh yes, size. The network load grows too. For a single image this is unnoticeable, but with continuous builds, tests and deployments it adds up. And if you don’t have God mode on AWS, you will also get an incredible bill.

Therefore, you need to choose the most suitable image, with an exact version and a minimum of software. For example: FROM ruby:2.5.5-stretch

P: Oh, I see. And where can I see the available images? How do I know which one I need?

M: Images are usually taken from Docker Hub (not to be confused with Pornhub :)). An image usually comes in several variants:

- Alpine: images built on a minimalistic Linux distribution, only about 5 MB. The downside: it uses its own libc implementation (musl instead of glibc), so software built for glibc may not work on it, and finding and installing the right package can take a lot of time.

- Scratch: an empty base image that contains nothing at all. It is intended solely for running prebuilt binaries and data. Ideal for self-contained binaries that include everything they need, such as Go applications.

- Based on a full OS, such as Ubuntu or Debian. Well, here, I think, no explanation is needed.
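To show what the scratch variant can look like in practice, here is a minimal sketch of a multi-stage build for a Go program. It assumes a hypothetical Go module in the build context (a go.mod plus a main package with no cgo dependencies); the stage name build and the paths /src and /out/app are illustrative.

```dockerfile
# Build stage: a full image that carries the Go toolchain.
FROM golang:1.21 AS build
WORKDIR /src
COPY . .
# With cgo disabled, a pure-Go program is typically linked statically,
# so the resulting binary does not need a libc at run time.
RUN CGO_ENABLED=0 go build -o /out/app .

# Final stage: an empty image that contains only the binary.
FROM scratch
COPY --from=build /out/app /app
ENTRYPOINT ["/app"]
```

Only the last stage ends up in the final image, so the toolchain and sources from the build stage are not shipped.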

M: Now we need to install all the extra packages and clean the caches. And you can drop apt-get upgrade right away. Otherwise, every build will produce a different image, despite the fixed tag of the base image. Updating packages in an image is the maintainer’s job, and it comes with a tag change.

P: Yes, I tried to do that. It turned out like this:

```dockerfile
WORKDIR /app
COPY ./ /app

RUN curl -sL https://deb.nodesource.com/setup_9.x | bash - \
    && apt-get -y install libpq-dev imagemagick gsfonts ruby-full ssh supervisor nodejs \
    && gem install bundler \
    && bundle install --without development test --path vendor/bundle

RUN rm -rf /usr/local/bundle/cache/*.gem \
    && apt-get clean \
    && rm -rf /var/lib/apt/lists/* /tmp/* /var/tmp/*
```

M: Not bad, but there is still something to work on. Look at this command:

```dockerfile
RUN rm -rf /usr/local/bundle/cache/*.gem \
    && apt-get clean \
    && rm -rf /var/lib/apt/lists/* /tmp/* /var/tmp/*
```

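It does not delete data from the final image; it only creates an additional layer without this data, while the layers below still contain it. The right way is to clean up in the same RUN instruction that created the files: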

```dockerfile
RUN curl -sL https://deb.nodesource.com/setup_9.x | bash - \
    && apt-get -y install libpq-dev imagemagick gsfonts nodejs \
    && gem install bundler \
    && bundle install --without development test --path vendor/bundle \
    && rm -rf /usr/local/bundle/cache/*.gem \
    && apt-get clean \
    && rm -rf /var/lib/apt/lists/* /tmp/* /var/tmp/*
```

But that’s not all. What do you have there, Ruby? Then there is no need to copy the entire project at the beginning. Just copy Gemfile and Gemfile.lock first.

With this approach, bundle install will not be executed for every change in the source, but only if Gemfile or Gemfile.lock has changed.

The same approach works for other languages with a dependency manager, such as npm, pip, composer and others that rely on a file listing the dependencies.

And finally, remember, at the beginning I talked about the Docker ideology “one container – one process”? This means that a supervisor is not needed. Also, do not install systemd, for the same reasons. In fact, Docker itself is a supervisor. And when you try to run several processes in it, it is like launching several applications in one supervisor process.

During the build you make a single image, and then you run as many containers as you need, so that each container has one process.

But more about this later.
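The manifest-first trick described above for Gemfile carries over to other dependency managers. As a sketch, here is what it might look like for npm; the base image tag and the server.js entry point are assumptions for illustration only.

```dockerfile
FROM node:10-stretch
WORKDIR /app

# Copy only the dependency manifests first, so this layer and the
# install step below stay cached until the manifests change.
COPY package.json package-lock.json /app/
RUN npm ci

# Source changes invalidate the cache only from this point on.
COPY . /app

# Hypothetical entry point of the application.
CMD ["node", "server.js"]
```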

P: I think I understand. Look at how it is now:

```dockerfile
FROM ruby:2.5.5-stretch

WORKDIR /app
# The trailing slash is required when the pattern matches several files.
COPY Gemfile* /app/

RUN curl -sL https://deb.nodesource.com/setup_9.x | bash - \
    && apt-get -y install libpq-dev imagemagick gsfonts nodejs \
    && gem install bundler \
    && bundle install --without development test --path vendor/bundle \
    && rm -rf /usr/local/bundle/cache/*.gem \
    && apt-get clean \
    && rm -rf /var/lib/apt/lists/* /tmp/* /var/tmp/*

COPY . /app
RUN rake assets:precompile

CMD ["bundle", "exec", "passenger", "start"]
```

And for the other daemons, do we override the command when the container starts?

M: Yes, that’s right. By the way, you can use both CMD and ENTRYPOINT. Figuring out the difference between them is your homework.
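One way to picture how the two instructions interact, and how one image can serve several daemons, is the sketch below. Splitting the command this way is an illustration rather than the setup used in this article, and the names sidekiq and my-image in the note after the block are hypothetical.

```dockerfile
# ENTRYPOINT is the fixed part of the command.
ENTRYPOINT ["bundle", "exec"]

# CMD supplies default arguments. They are appended to ENTRYPOINT and
# replaced by whatever is passed after the image name in `docker run`.
CMD ["passenger", "start"]
```

With this split, docker run my-image starts bundle exec passenger start, while docker run my-image sidekiq would start bundle exec sidekiq from the same image.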

M: Let’s move on. You download a script to install Node.js, but there is no guarantee that it contains what you expect. You need to add validation. For example, like this:

```dockerfile
RUN curl -sL https://deb.nodesource.com/setup_9.x > setup_9.x \
    && echo "958c9a95c4974c918dca773edf6d18b1d1a41434  setup_9.x" | sha1sum -c - \
    && bash setup_9.x \
    && rm -rf setup_9.x \
    && apt-get -y install libpq-dev imagemagick gsfonts nodejs \
    && gem install bundler \
    && bundle install --without development test --path vendor/bundle \
    && rm -rf /usr/local/bundle/cache/*.gem \
    && apt-get clean \
    && rm -rf /var/lib/apt/lists/* /tmp/* /var/tmp/*
```

Using the checksum, you can verify that you downloaded the correct file.

P: But if the file changes, the build will fail.

M: Yes, but that is also a plus. You will find out that the file has changed, and you can look at what was changed in it. You never know what somebody might have added to it.

P: Thank you. It turns out that the final Dockerfile will look like this:

```dockerfile
FROM ruby:2.5.5-stretch

WORKDIR /app
# Copy only the dependency manifests so bundle install stays cached.
COPY Gemfile* /app/

# Verify the downloaded script, install, and clean up in a single layer.
RUN curl -sL https://deb.nodesource.com/setup_9.x > setup_9.x \
    && echo "958c9a95c4974c918dca773edf6d18b1d1a41434  setup_9.x" | sha1sum -c - \
    && bash setup_9.x \
    && rm -rf setup_9.x \
    && apt-get -y install libpq-dev imagemagick gsfonts nodejs \
    && gem install bundler \
    && bundle install --without development test --path vendor/bundle \
    && rm -rf /usr/local/bundle/cache/*.gem \
    && apt-get clean \
    && rm -rf /var/lib/apt/lists/* /tmp/* /var/tmp/*

COPY . /app
RUN rake assets:precompile

CMD ["bundle", "exec", "passenger", "start"]
```

P: Mr. Martin, thanks for the help. It’s time for me to go, I need to make 10 more commits today.

Martin, stopping his hasty colleague with a look, takes a sip of strong coffee. After thinking for a few seconds about the 99.9% SLA and code without bugs, he asks a question.

M: Where do you keep the logs?

P: In production.log, of course. By the way, how can we access them without ssh?

M: If you leave them in files, a solution has already been invented for you. The docker exec command lets you execute any command in a container. For example, you can cat the logs. And with the -it switch and bash (if it is installed in the container), you get interactive access to the container.

But you should not store logs in files. At the very least, this leads to uncontrolled growth of the container, because no one rotates the logs. All logs should be written to stdout. Then you can view them with the docker logs command.
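For a Rails application, a minimal sketch of sending logs to stdout could look like this. It assumes that the app’s config/environments/production.rb contains the RAILS_LOG_TO_STDOUT check that generated Rails 5 and 6 apps include by default; if yours does not, the logger has to be pointed at STDOUT in the application config instead.

```dockerfile
# Assumption: production.rb switches the logger to STDOUT when
# RAILS_LOG_TO_STDOUT is set (the default in generated Rails 5/6 apps).
ENV RAILS_ENV=production \
    RAILS_LOG_TO_STDOUT=true
```

With this in place, the application output is what docker logs shows for the container, and nothing accumulates in production.log.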

P: Mr. Martin, can we put the logs into a separate directory on the physical node, like user data?

M: It’s good that you remembered to keep uploaded data on the node’s disk rather than in the container. You can do the same with logs, just remember to set up rotation.

That’s it, off you go.

P: Mr. Martin, can you recommend something to read?

M: To get started, read the recommendations from the Docker developers; hardly anyone knows Docker better than they do.
