Apache Kafka + Spring Boot: Hello, microservices

In this post, we will write an application on Spring Boot 2 using Apache Kafka under Linux, from installing the JRE to a working microservice application.

Colleagues from the front-end development department who saw the article complain that I do not explain what Apache Kafka and Spring Boot are. I believe that anyone who needs to assemble a finished project with these technologies knows what they are and why they are needed.

So we can do without lengthy explanations of what Kafka, Spring Boot and Linux are. Instead, we will run the Kafka server from scratch on a Linux machine, write two microservices and make one of them send messages to the other. In short, we will set up a complete microservice architecture.

The post will consist of two articles. In the first we configure and run Apache Kafka on a Linux machine, in the second we write two microservices in Java.

At the startup where I began my professional career as a programmer, there were microservices on Kafka, and one of my microservices also talked to the others through Kafka. But I did not know how the server itself worked: whether it was written as an application or was a ready-made boxed product. To my surprise and disappointment, Kafka turned out to be a boxed product, and my task would be not only to write a client in Java (which I love to do) but also to deploy and configure the finished application as a DevOps engineer (which I hate to do). However, if even I could bring Kafka up on a virtual server in less than a day, it really is quite simple to do. So.

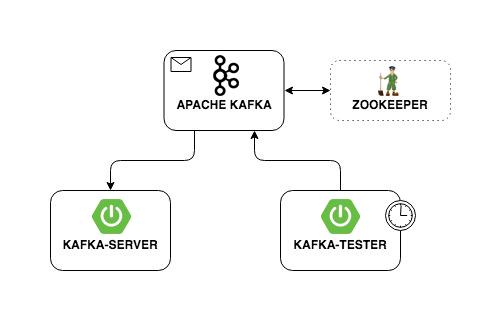

Our application will have the following interaction structure:

Deploy Apache Kafka + Zookeeper on a virtual machine

I tried to bring Kafka up on local Linux, on a Mac and on remote Linux. In the two Linux cases I succeeded quite quickly. On the Mac I have not managed it yet. Therefore, we will bring Kafka up on Linux. I chose Ubuntu 18.04.

Kafka needs Zookeeper in order to work, so you must download and start Zookeeper before launching Kafka.

So.

0. Install JRE

This is done with the following commands:

sudo apt-get update

sudo apt-get install default-jre

If everything went well, you can enter the command

java -version

and make sure Java is installed.

1. Download Zookeeper

I don’t like magic commands on Linux, especially when you are just handed a few of them and it is not clear what they do. Therefore, I will describe what each action does. So, we need to download Zookeeper and unpack it to a convenient folder. It is advisable to keep all applications in the /opt folder, so in our case it will be /opt/zookeeper.

I used the command below. If you know other Linux commands that will do the same job, use them. I’m a developer, not a DevOps engineer, and I communicate with servers on a different level. So, download the application:

wget -P /home/xpendence/downloads/ "http://apache-mirror.rbc.ru/pub/apache/zookeeper/zookeeper-3.4.12/zookeeper-3.4.12.tar.gz"

The application is downloaded to the folder that you specify. I created the folder /home/xpendence/downloads to keep all the applications I need there.

2. Unzip Zookeeper

I used the command:

tar -xvzf /home/xpendence/downloads/zookeeper-3.4.12.tar.gz

This command unpacks the archive into the folder you are currently in, creating a zookeeper-3.4.12 subfolder there. You will then need to move the application to /opt/zookeeper. Alternatively, you can go to /opt right away, unpack the archive from there and rename the resulting folder.
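One way to skip the separate move step is to let tar unpack straight into the target folder. The sketch below assumes a tar that supports --strip-components (GNU tar on Ubuntu does) and an archive that keeps everything under a single top-level folder, as the Zookeeper one does. The helper name unpack_to is made up for illustration.

```shell
# unpack_to ARCHIVE TARGET_DIR (hypothetical helper)
# Unpacks a .tar.gz archive into TARGET_DIR and drops the archive's single
# top-level folder (e.g. zookeeper-3.4.12/), so files land directly in TARGET_DIR.
unpack_to() {
  local archive="$1" target="$2"
  mkdir -p "$target" &&
    tar -xzf "$archive" -C "$target" --strip-components=1
}
```

For a target under /opt you would call it from a root shell, for example: unpack_to /home/xpendence/downloads/zookeeper-3.4.12.tar.gz /opt/zookeeper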

3. Edit settings

In the folder /opt/zookeeper/conf/ there is a file zoo_sample.cfg. I suggest renaming it to zoo.cfg, since this is the file the startup script looks for. Make sure the following settings are in this file, adding them at the end if needed:

tickTime=2000

dataDir=/var/zookeeper

clientPort=2181

Also, create the /var/zookeeper directory.
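The configuration step can also be scripted. This is only a sketch: the function name write_zoo_cfg and its two arguments are hypothetical, and instead of renaming the sample file it writes a fresh minimal zoo.cfg containing just the three settings from this step.

```shell
# write_zoo_cfg CONF_DIR DATA_DIR (hypothetical helper)
# Creates DATA_DIR and writes a minimal standalone zoo.cfg into CONF_DIR.
write_zoo_cfg() {
  local conf_dir="$1" data_dir="$2"
  mkdir -p "$conf_dir" "$data_dir" || return 1
  cat > "$conf_dir/zoo.cfg" <<EOF
# length of one tick in milliseconds, Zookeeper's basic time unit
tickTime=2000
# directory where Zookeeper keeps its snapshots
dataDir=$data_dir
# port that clients (Kafka included) connect to
clientPort=2181
EOF
}
```

With the paths used in this article, the call would be write_zoo_cfg /opt/zookeeper/conf /var/zookeeper, run with enough rights to write to both locations.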

4. Launch Zookeeper

Go to the /opt/zookeeper folder and start the server with the command:

bin/zkServer.sh start

“STARTED” should appear.

After this, I propose to check that the server is working. We write:

telnet localhost 2181

A message should appear saying that the connection was successful. If you have a weak server and the message did not appear, try again: even after STARTED appears, the application may start listening on the port much later. When I tested all of this on a weak server, it happened to me every time. Once you are connected, enter the command

ruok

which means “Are you OK?” The server should respond:

imok

which means “I'm OK!”, and then disconnect. So, everything is going according to plan. We proceed to launch Apache Kafka.
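The manual check, including the retries needed on a slow server, could be automated roughly like this. It is a sketch under assumptions: nc (netcat) is installed, the helper names retry and zk_ok are invented here, and the attempt count and delay in the usage line are arbitrary.

```shell
# retry ATTEMPTS DELAY_SECONDS COMMAND...
# Runs COMMAND until it succeeds, at most ATTEMPTS times,
# sleeping DELAY_SECONDS after each failed attempt.
retry() {
  local attempts="$1" delay="$2" i=1
  shift 2
  while [ "$i" -le "$attempts" ]; do
    "$@" && return 0
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}

# zk_ok HOST PORT
# Sends the four-letter command "ruok" and succeeds only if the reply is "imok".
zk_ok() {
  [ "$(printf 'ruok' | nc -w 5 "$1" "$2" 2>/dev/null)" = "imok" ]
}
```

A possible call after starting the server: retry 10 3 zk_ok localhost 2181 && echo "Zookeeper is up"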

5. Create a user for Kafka

To work with Kafka we need a separate user.

sudo adduser --system --no-create-home --disabled-password --disabled-login kafka

6. Download Apache Kafka

There are two distributions: binary and sources. We need the binary. You can tell the archives apart by size: the binary is about 59 MB, the sources about 6.5 MB.

Download the binary to the same directory, using the link below:

wget -P /home/xpendence/downloads/ "http://mirror.linux-ia64.org/apache/kafka/2.1.0/kafka_2.11-2.1.0.tgz"

7. Unzip Apache Kafka

The unpacking procedure is no different from the one for Zookeeper. We unpack the archive into the /opt directory and rename the resulting folder to kafka, so that the path to the bin folder is /opt/kafka/bin. Note that we unpack the binary archive downloaded in the previous step, not the sources:

tar -xvzf /home/xpendence/downloads/kafka_2.11-2.1.0.tgz

8. Edit settings

Settings are in /opt/kafka/config/server.properties. Add one line:

delete.topic.enable=true

This setting allows you to delete topics from the command line. It appears to be optional: Kafka 1.0 and later enable it by default, while on older versions topics simply cannot be deleted without it.
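If you prefer not to edit server.properties by hand, a small helper can set a key whether or not it is already present. This is a sketch only: set_prop is a hypothetical name, and it assumes simple key=value lines with no spaces around the equals sign.

```shell
# set_prop FILE KEY VALUE (hypothetical helper)
# Removes any existing "KEY=..." line from FILE and appends "KEY=VALUE",
# so running it twice does not leave duplicate entries.
set_prop() {
  local file="$1" key="$2" value="$3" tmp status
  tmp=$(mktemp) || return 1
  # keep every line that does not start with the literal text "KEY="
  awk -v k="$key=" 'index($0, k) != 1' "$file" > "$tmp" &&
    printf '%s=%s\n' "$key" "$value" >> "$tmp" &&
    cat "$tmp" > "$file"   # write back in place, keeping the file's owner and mode
  status=$?
  rm -f "$tmp"
  return "$status"
}
```

For this step the call would be set_prop /opt/kafka/config/server.properties delete.topic.enable true.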

9. Give the kafka user access to the Kafka directories

chown -R kafka:nogroup /opt/kafka

chown -R kafka:nogroup /var/lib/kafka

The second command assumes that the /var/lib/kafka directory already exists.

10. The long-awaited launch of Apache Kafka

We enter the command, after which Kafka should start:

/opt/kafka/bin/kafka-server-start.sh /opt/kafka/config/server.properties

If the usual stream of errors (Kafka is written in Java and Scala) did not pour into the log, then everything worked and you can test the server.

10.1. Weak server issues

For experiments with Apache Kafka, I took a weak server with one core and 512 MB of RAM, which caused me a few problems.

Out of memory. Of course, you cannot do much with 512 MB, and the server could not start Kafka for lack of memory. The reason is that by default Kafka asks for a 1 GB heap.

Open kafka-server-start.sh (and, likewise, zookeeper-server-start.sh). There is already a line that controls memory; in kafka-server-start.sh it is:

export KAFKA_HEAP_OPTS="-Xmx1G -Xms1G"

Change it to:

export KAFKA_HEAP_OPTS="-Xmx256M -Xms128M"

This will reduce the appetite of Kafka and allow you to start the server.
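In the Kafka 2.1.0 start script that export sits inside a guard that applies the default only when KAFKA_HEAP_OPTS is empty, so setting the variable before launch should work as an alternative to editing the script. The function below is not Kafka's code, just a sketch that reproduces the guard (default_heap is a made-up name) to show why a value set beforehand wins.

```shell
# default_heap (made-up name): mimics the guard in kafka-server-start.sh.
# The 1 GB default is applied only when KAFKA_HEAP_OPTS is empty or unset;
# a value exported earlier is left untouched.
default_heap() {
  if [ "x${KAFKA_HEAP_OPTS:-}" = "x" ]; then
    export KAFKA_HEAP_OPTS="-Xmx1G -Xms1G"
  fi
}
```

Under that assumption, launching with KAFKA_HEAP_OPTS="-Xmx256M -Xms128M" /opt/kafka/bin/kafka-server-start.sh /opt/kafka/config/server.properties keeps the smaller heap without touching the script.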

The second problem with a weak machine is that Kafka runs out of time while connecting to Zookeeper. By default, 6 seconds are allowed for this. If the hardware is weak, this, of course, is not enough. In server.properties, we increase the Zookeeper connection timeout:

zookeeper.connection.timeout.ms = 30000

I set half a minute.

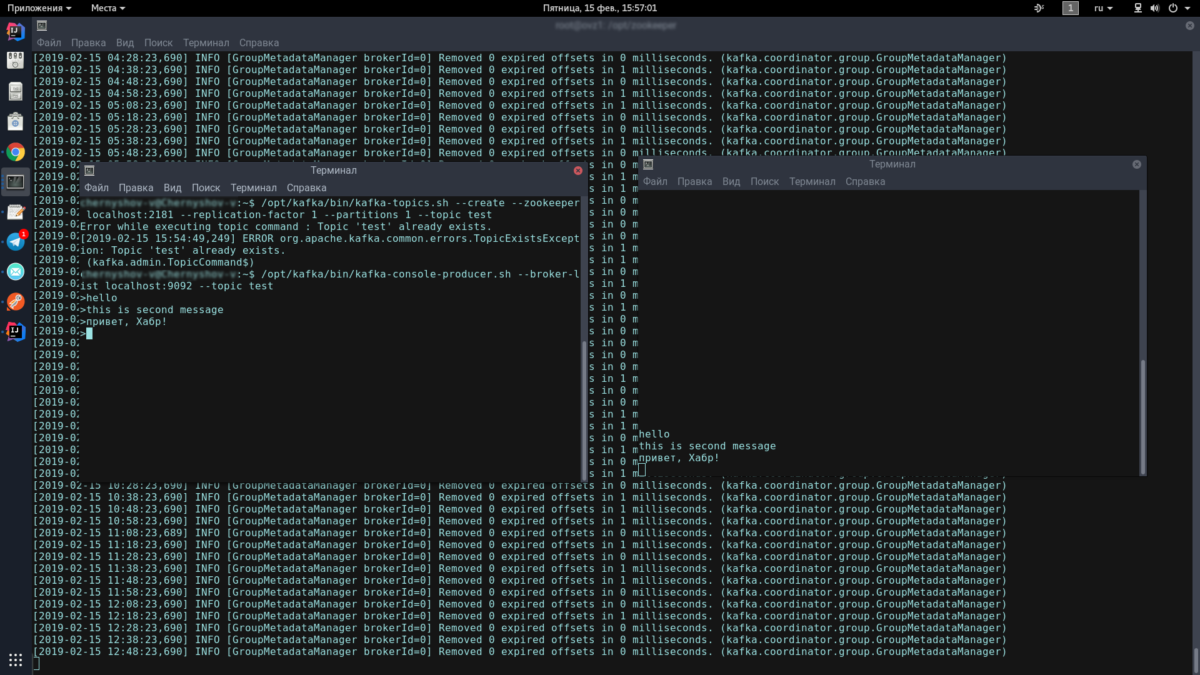

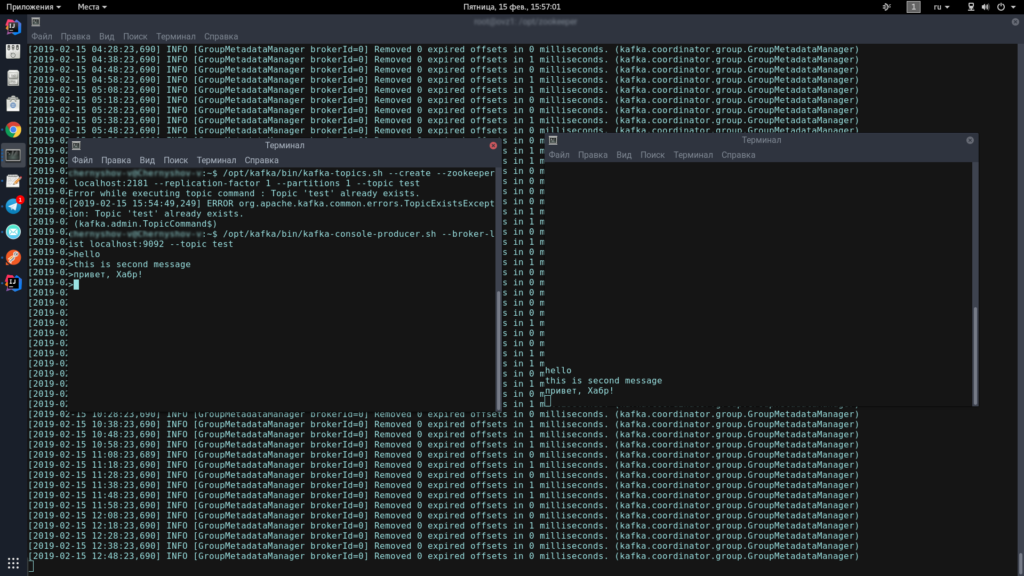

11. Test the Kafka server

To do this, we will open two terminals: in one we will launch the producer, in the other the consumer.

In the first console, create a topic and then start the producer:

/opt/kafka/bin/kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic test

/opt/kafka/bin/kafka-console-producer.sh --broker-list localhost:9092 --topic test

This prompt should appear, meaning that the producer is ready to send messages:

>

In the second console, enter the command:

/opt/kafka/bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic test --from-beginning

Now whatever you type in the producer console will appear in the consumer console as soon as you press Enter.
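The same check can be done without typing by hand, for example from a script. This is a rough sketch, not part of the Kafka distribution: the function name round_trip, the KAFKA_BIN variable and the 10-second consumer timeout are assumptions, and it expects the broker from the previous steps to be listening on localhost:9092.

```shell
# Folder with the Kafka scripts; override it if Kafka lives elsewhere.
KAFKA_BIN="${KAFKA_BIN:-/opt/kafka/bin}"

# round_trip TOPIC MESSAGE (hypothetical helper)
# Publishes one message, reads the topic back from the beginning, and succeeds
# only if some line printed by the consumer is exactly MESSAGE.
round_trip() {
  local topic="$1" message="$2"
  printf '%s\n' "$message" |
    "$KAFKA_BIN/kafka-console-producer.sh" --broker-list localhost:9092 \
      --topic "$topic" >/dev/null
  # --timeout-ms makes the consumer exit once no new messages arrive for 10 s
  "$KAFKA_BIN/kafka-console-consumer.sh" --bootstrap-server localhost:9092 \
    --topic "$topic" --from-beginning --timeout-ms 10000 2>/dev/null |
    grep -qxF -- "$message"
}
```

A possible call: round_trip test "hello from the script" && echo "Kafka works"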

If you see on the screen approximately the same as me – congratulations, the worst is over!

Now we just have to write a couple of clients on Spring Boot that will communicate with each other through Apache Kafka.
